When the AI Needed a Translator

Under a week. No user research. One person bridging a creative team and an engineering team that couldn't talk to each other, to ship an AI coding mentor for kids that worked.

Overview

The Mississippi Children's Museum needed an AI coding mentor for kids — C.H.A.R.L.I. (Code Helping AI Robotic Learning Instructor) — built on AWS and designed to feel encouraging rather than robotic. The creative team had the educational vision. The engineering team had the AWS infrastructure. Neither could translate their work into terms the other could act on. I was brought in with less than a week to bridge that gap — with no user research, no testing data, and no time to get either. What I did have was the specific combination of knowledge the project needed: how children learn, and how agentic AI systems are actually structured. In that room, at that moment, that combination existed in exactly one person.

The Situation

I didn't come into this project at the planning stage. The creative team reached out because they were stuck — they had a clear educational purpose and no way to communicate it technically, and the development team had built capable infrastructure with no clear behavioral direction. The museum had a deadline, a mission, and a gap in the middle that nobody on the existing team could fill.

That gap was specific: someone who understood both what a child needs from a learning experience and how an agentic AI system is actually structured. I was the only person in the room who had both.

What made it harder was the timeline. Under a week to define the UX, translate educational goals into behavioral logic, establish design standards the developers could implement, and ensure the whole thing met WCAG 2.2 AA — for an audience that would never sit still long enough to be tested.

Walking into a project at that stage means inheriting every decision that was already made, every assumption that was already baked in, and every stakeholder expectation that was already set — with no time to question any of it. You assess fast, commit faster, and hope your instincts are good.

The Constraint

The thing that kept me up about this project was the user testing problem. Normally, when you're designing an experience for children, you test with children. You watch them interact, you see where they get confused, you iterate. We had none of that. The museum couldn't provide user research. There was no time to conduct any. Every decision about how C.H.A.R.L.I. would behave — how patient, how encouraging, how directive, how much it would explain versus ask — had to be made from experience rather than evidence.

That's a significant design risk. I named it explicitly to the team — not to create doubt, but because unacknowledged risk doesn't disappear, it just becomes someone else's surprise later. The team understood. We moved forward with eyes open.

I've designed enough children's digital experiences to have pattern recognition I could lean on. But I want to be clear about what this was: educated guessing under pressure, not validated design. The outcomes were strong. That doesn't mean the process was replicable.

How I Thought About It

The first thing I did was reframe what the problem actually was. The engineering team didn't need more design assets — they needed a decision framework. What should C.H.A.R.L.I. do when a child gets something wrong? How many attempts before it offers a hint? What's the tone when a kid succeeds versus when they're stuck? These weren't questions the developers could answer, and they weren't questions the creative team had translated into anything actionable.

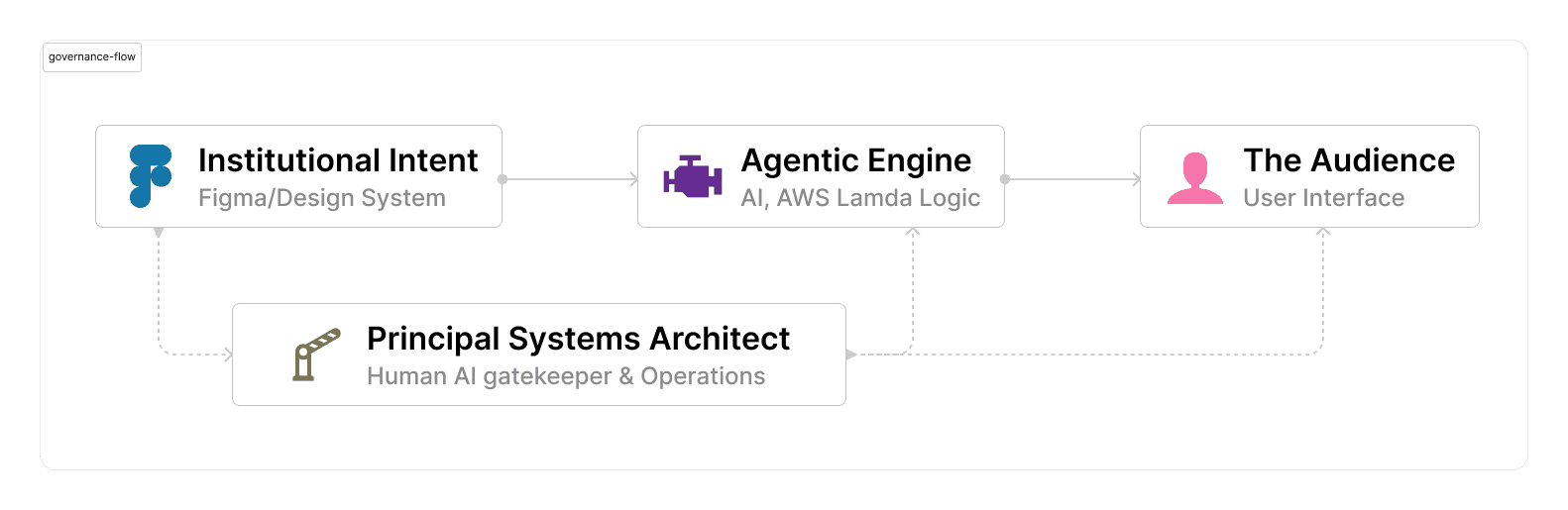

I used Figma as the translation layer — not just for visual design, but as the governance document for the behavioral logic. Every interaction pattern was mapped and annotated with the reasoning behind it, not just what to build. The token system I established wasn't primarily visual — it was a communication protocol that let developers make decisions aligned with educational intent without having to interrupt the design process to ask.

The behavioral direction I gave for the AI's personality came down to one principle I kept returning to: a good teacher never makes a student feel stupid for not knowing something. Every response pattern C.H.A.R.L.I. could generate had to pass that test. Not "is this technically correct" but "would this make a ten-year-old feel safe to try again."

For accessibility, I led the design audit in Figma against WCAG 2.2 AA. On a project with this timeline there's a temptation to treat accessibility as a final pass — something you check at the end. I built it into the component standards from the start, which is the only way it actually holds when developers are moving fast. Accessibility on a children's product isn't just a compliance requirement — it's a statement about who the institution believes deserves access to what it's building. At a children's museum, that answer should be every child. I built that answer into the foundation so nobody on the team would have to make that decision under pressure later.
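To make "built into the component standards" concrete, here is a minimal sketch of one check that can run against color tokens: the WCAG contrast-ratio formula with the AA thresholds (4.5:1 for normal text, 3:1 for large text). The function names and the sample colors are illustrative assumptions, not values from the C.H.A.R.L.I. design system, and contrast is only one of many WCAG 2.2 AA criteria.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 8-bit sRGB channels, 0-255


def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(color: RGB) -> float:
    """WCAG relative luminance: weighted sum of the linearized channels."""
    r, g, b = (_linearize(ch) for ch in color)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(foreground: RGB, background: RGB, large_text: bool = False) -> bool:
    """AA minimum contrast: 4.5:1 for normal text, 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


# Hypothetical token pair: mid-grey text on a white surface.
print(meets_aa((118, 118, 118), (255, 255, 255)))
```

A check like this can run whenever a token changes, so a color pair that misses the threshold is caught at the component level instead of in a final audit.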

What I Built

The primary deliverable was a design system and behavioral logic framework that gave the engineering team enough direction to execute without requiring constant design oversight. Everything I built was designed to outlast my involvement — documentation and decision frameworks that the team could carry forward without me in the room. Under a week means you won't be there to answer questions later. The work has to speak for itself.

Component standards and interaction patterns in Figma — including age-appropriate visual design, motion guidelines, and error states — with documented reasoning the developers could reference independently. Not just what to build, but why it was built that way, so the next decision down the line could be made with the same intent.

Behavioral logic maps translating educational goals into if-then decision trees for the AWS agentic layer. I didn't write the Lambda functions or configure Bedrock — I defined what the AI should decide and why, in terms a developer could act on without needing a design degree to interpret.
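As a rough illustration of what one of those if-then rules can look like once a developer picks it up, here is a small sketch. It is not the logic that shipped: the attempt threshold, the action names, and the `AttemptState` type are all hypothetical placeholders chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold. The real number of attempts before a hint is a
# design decision documented in the behavioral maps, not stated here.
HINT_AFTER_FAILED_ATTEMPTS = 2


@dataclass(frozen=True)
class AttemptState:
    correct: bool          # did the child's latest answer pass?
    failed_attempts: int   # failed tries on this exercise, including this one


def next_action(state: AttemptState) -> str:
    """Map the child's current state to one of three illustrative actions."""
    if state.correct:
        return "celebrate"          # success: reinforce, then move on
    if state.failed_attempts >= HINT_AFTER_FAILED_ATTEMPTS:
        return "offer_hint"         # stuck: give a nudge, never the full answer
    return "encourage_retry"        # early miss: keep it safe to try again


print(next_action(AttemptState(correct=False, failed_attempts=1)))
```

The point of writing the rule this way is that every branch carries its educational reason next to it, so a developer changing the threshold can see what the change is supposed to protect.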

A governance framework for C.H.A.R.L.I.'s voice and behavior — guardrails ensuring the AI stayed within its educational mandate and never generated a response that would confuse, discourage, or alienate a child. The engineering was capable. My job was making sure capability served kindness.

WCAG 2.2 AA compliance woven into the component architecture from day one — not as a checklist at the end, but as a baseline assumption every element was built against. Every child deserves access. That's not a feature. It's a given.

The Outcome

Three months post-launch:

- 50% reduction in operational expenditure — the governance framework and agentic logic reduced the need for human facilitation that had originally been scoped into the delivery model

- 40% faster project velocity — the Figma-to-development direction eliminated the translation bottleneck that had stalled progress before I joined

- 30% increase in user engagement — keeping the educational purpose central to every interaction decision drove measurably higher institutional impact

The Mississippi Children's Museum had a working, accessible, brand-consistent AI coding mentor for kids — built under significant constraint and delivered on time.

Those numbers matter. But what they don't capture is what it means to ship something for children — knowing that a kid somewhere is going to sit down with C.H.A.R.L.I. and either feel capable or feel lost, and that the difference between those two outcomes lived in decisions made under a week of pressure with no user research and a team that had never worked together. It worked. That's not nothing.

What I'd Do Differently

I would have pushed harder for even one round of user testing with actual children before launch, even informal observation. The behavioral decisions I made were grounded in experience, and they worked — but "it worked" and "we validated it" are not the same thing, and I knew that going in. With another week and access to the museum's programming, I would have found a way to watch kids interact with a prototype before the final build was locked.

I also would have documented the behavioral logic framework more thoroughly during the build rather than reconstructing it afterward. When you're moving fast, documentation feels like overhead. It always costs you later.

The constraint of being brought in at the last moment is something I couldn't have changed — that's the condition I was handed. But within that condition, the one thing fully in my control was how thoroughly I left the work documented for whoever came after me. I could have done that better. What I know now is that documentation isn't the last thing you do on a fast project — it's the thing that determines whether the fast project becomes a foundation or a footnote. C.H.A.R.L.I. deserved a foundation. Next time, I'll build that in from day one regardless of the timeline.

The framework is documented.

The governance laws, agentic behavior trees, and DesignOps specifications from this project are publicly available — including the Figma-to-AWS design token bridge and the AI behavioral guardrails.

View the framework

Working on something that needs this kind of thinking?

I help teams build systems that scale — and the governance to keep them working.